Artificial Intelligence (AI). It’s everywhere. And I’m not talking about just recently - although you can thank a certain chatbot for making it a hot topic within the cultural zeitgeist. AI has been powering the technology all around us for quite some time. It powers navigation apps that provide real-time route suggestions. Among other things, AI is used to inform ETAs for rideshare apps, power transcription technology, and filter your inbox to spare you from thousands of spam emails. However, it’s also powering higher-risk technology, including autonomous vehicles and fraud detection.

When any technology is thrust into the mainstream, there are risks. The advent of the automobile was followed by the formation of the National Highway Traffic Safety Administration. New drugs go through extensive studies before the Food and Drug Administration (FDA) deems them viable for the masses. And we’re seeing it now with AI, as calls from both tech and policy leaders to regulate AI get louder. The UK Information Commissioner’s Office recently commented on the widespread adoption of AI by urging businesses not to rush products to market without first properly assessing the privacy risks attached. Additionally, industry leaders have acknowledged the rapid pace at which this technology is evolving, as seen in the voluntary pact being developed by Alphabet and the European Union that would help govern the use of AI in lieu of formal legislation.

For most businesses, entering into a voluntary pact with the European Union is unlikely to be an option, meaning you’ll have to turn to alternative measures. So, how can you approach the development of novel technologies with a defined risk-based approach? There are a number of frameworks currently available for you to leverage when building AI products and solutions. Such frameworks can give you the guidance and practical advice to approach the development of these new technologies in an ethical and risk-aware manner. Standards bodies such as the National Institute of Standards and Technology (NIST) and the International Organization for Standardization (ISO) are just some of the organizations that have provided frameworks for AI and similar technologies. In this article we will take a closer look at the Organisation for Economic Co-operation and Development (OECD) Framework for the Classification of AI Systems and its companion checklist, how it compares to its NIST and ISO counterparts, and how you can approach the adoption of this framework.

What Is the OECD Framework for the Classification of AI Systems?

The OECD Framework for the Classification of AI Systems is a guide aimed at policy makers. It sets out several dimensions against which to characterize AI systems, and links these characteristics to the OECD AI Principles. The framework defines a clear set of goals: to establish a common understanding of AI systems, inform data inventories, support sector-specific frameworks, assist with risk management and risk assessments, and further the understanding of typical AI risks such as bias, explainability, and robustness.

The OECD AI Principles establish a set of standards for AI that are intended to be practical and flexible. These include:

Inclusive growth, sustainable development, and well-being - This principle recognizes AI as a technology with a potentially powerful impact. Therefore, it can, and should, be used to help advance some of the most critical goals for humanity, society, and sustainability. However, it is critical to mitigate AI’s potential to perpetuate or even aggravate existing social biases, or to distribute risks and negative impacts unevenly based on wealth or territory. AI should be built to empower everyone.

Human-centered values and fairness - This principle aims to place human rights, equality, fairness, rule of law, social justice, and privacy at the center of the development and functioning of AI systems. Like the concept of Privacy by Design, it ensures that these elements are considered throughout each stage of the AI life cycle, and in particular in the design stages. If this principle isn’t maintained, the result can be infringement of basic human rights, discrimination, and an erosion of the public’s trust in AI generally.

Transparency and explainability - This principle is based on disclosing when AI is being used and enabling people to understand how the AI system is built, how it operates, and what information it is fed. Transparency aligns with the commonly understood definition whereby individuals are made aware of the details of the processing activity, allowing them to make informed choices. Explainability focuses on enabling affected individuals to understand how the system reached the outcome. When provided with transparent and accessible information, individuals can challenge the outcome more easily.

Robustness, security, and safety - This principle ensures that AI systems are developed to withstand digital security risks and that they do not present unreasonable risks to consumer safety when used. Central considerations for maintaining this principle include traceability, which is focused on maintaining records of data characteristics, data sources, and data cleaning, as well as applying a risk management approach along with appropriate documentation of risk-based decisions.

Accountability - This principle will be familiar to most privacy professionals. Accountability underpins the AI system’s life cycle with the responsibility to ensure that the AI system functions properly and is demonstrably aligned with the other principles.

How Does the OECD Framework Compare to Other Frameworks?

The OECD Framework for the Classification of AI Systems is not the only framework that focuses on establishing governance processes for the trustworthy and responsible use of AI and similar technologies. In recent years, several organizations have developed and released frameworks aimed at helping businesses build a program for governing AI systems. When assessing which approach is right for you, there are a few frameworks to consider. While the OECD framework can be used to support your approach, its breadth means that it can easily supplement other available frameworks. This means you won’t need to choose between OECD, NIST, or ISO; instead, you can decide how to fit these frameworks together to work harmoniously. Here we will look at both the NIST and ISO frameworks.

NIST AI Risk Management Framework

On January 26, 2023, NIST released the Artificial Intelligence Risk Management Framework (AI RMF) - a guidance document for voluntary use by organizations that design, develop, or use AI systems. The AI RMF aims to provide a practical framework for measuring and protecting against the potential harm posed by AI systems by mitigating risk, unlocking opportunity, and raising the trustworthiness of AI systems. NIST outlines the following characteristics against which organizations can measure the trustworthiness of their systems:

Valid and reliable

Safe

Secure and resilient

Accountable and transparent

Explainable and interpretable

Privacy-enhanced

Fair with harmful bias managed

In addition, the NIST AI RMF provides actionable guidance across four core functions – govern, map, measure, and manage. These functions aim to give organizations a framework for understanding and assessing risk, and for keeping on top of those risks with defined processes.

ISO guidance on artificial intelligence risk management

On February 6, 2023, the ISO published ISO/IEC 23894:2023 – a guidance document for artificial intelligence risk management. Like the NIST AI RMF, the ISO guidance is aimed at helping organizations that develop, deploy, or use AI systems to introduce risk management best practices. The guidance outlines a range of guiding principles that highlight that risk management should be:

Integrated

Structured and comprehensive

Customized

Inclusive

Dynamic

Informed by the best available information

Attentive to human and cultural factors

Continuously improved

Again, like the NIST AI RMF, the ISO guidance defines processes and policies for the application of AI risk management. These include communicating and consulting, establishing the context, and assessing, treating, monitoring, reviewing, recording, and reporting on the risks attached to the development and use of AI systems, taking the AI system life cycle into account.

How do these frameworks compare to the OECD framework?

The application of the OECD framework is intended to be broader than that of other AI risk management frameworks. It aims to help organizations understand and assess AI systems across multiple contexts, or dimensions, as they are referred to within the OECD’s framework, including:

People & Planet

Economic Context

Data & Input

AI Model

Task & Output

Unlike the NIST and ISO frameworks, the OECD framework promotes building a fundamental understanding of AI and related language to help inform policies within each defined context. The OECD framework also supports sector-specific frameworks and can be used in tandem with financial or healthcare-specific regulation or guidance related to AI usage. It also aims to support the development of risk assessments as well as governance policies for ongoing management of AI risk.
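To make the five dimensions listed above more concrete, here is a minimal Python sketch of how a team might record what it knows about one AI system under each dimension and see which dimensions still need attention. The dimension names come from the OECD framework; everything else (the `SystemClassification` class, its fields, and the example system) is a hypothetical illustration, not part of the framework or of any product.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Dict, List


class Dimension(Enum):
    """The five dimensions named in the OECD framework."""
    PEOPLE_AND_PLANET = "People & Planet"
    ECONOMIC_CONTEXT = "Economic Context"
    DATA_AND_INPUT = "Data & Input"
    AI_MODEL = "AI Model"
    TASK_AND_OUTPUT = "Task & Output"


@dataclass
class SystemClassification:
    """Hypothetical record: free-text notes about one AI system, per dimension."""
    system_name: str
    notes: Dict[Dimension, List[str]] = field(default_factory=dict)

    def add_note(self, dimension: Dimension, note: str) -> None:
        # Create the list for this dimension on first use, then append.
        self.notes.setdefault(dimension, []).append(note)

    def unassessed_dimensions(self) -> List[Dimension]:
        # Dimensions with no notes yet, in the order they are declared above.
        return [d for d in Dimension if not self.notes.get(d)]


# Example system and notes are invented for illustration only.
record = SystemClassification("spam-filter")
record.add_note(Dimension.DATA_AND_INPUT, "Trained on user-reported spam emails")
record.add_note(Dimension.TASK_AND_OUTPUT, "Binary classification: spam or not spam")

missing = [d.value for d in record.unassessed_dimensions()]
print(missing)
```

A structure like this is only a starting point: the framework itself describes each dimension in far more detail, and a real inventory would likely capture structured answers rather than free-text notes.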

Both NIST and ISO AI risk management frameworks contain a deeper focus on the specific controls and requirements for auditing risk. As such, they can complement the more ‘policy-centric’ parts of the OECD framework and can help organizations further mature and operationalize their take on AI systems development and usage.

How Can Organizations Implement the OECD Framework for Classification of AI Systems?

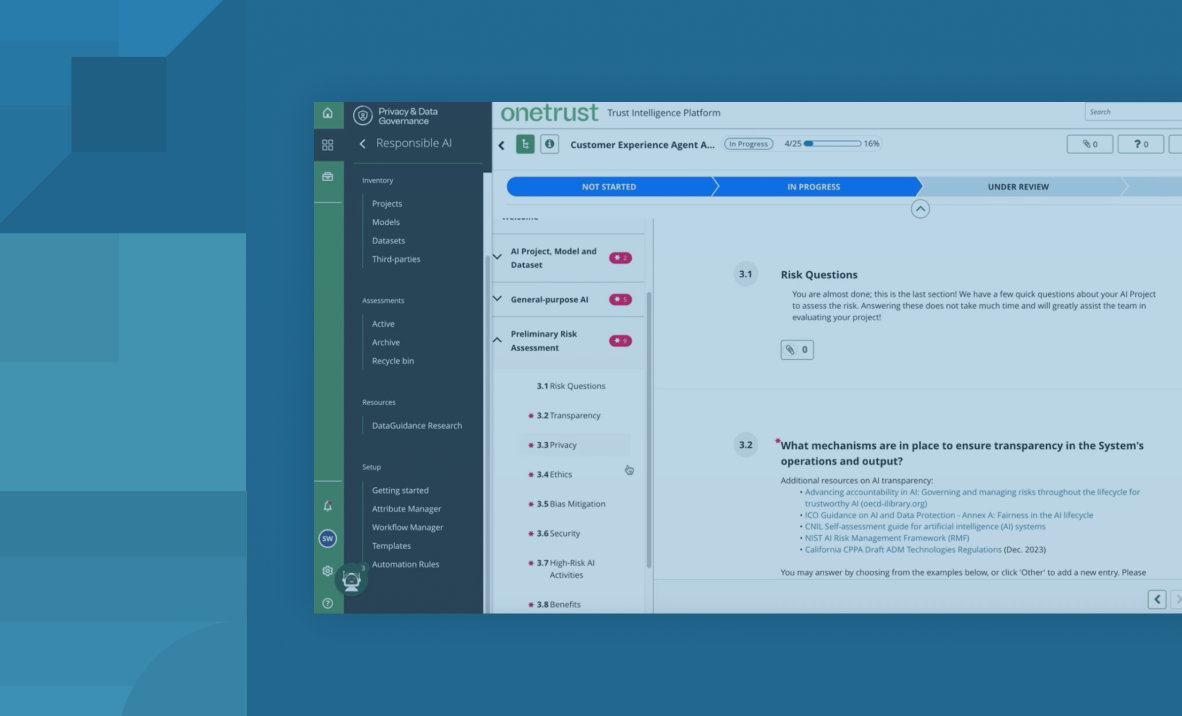

OneTrust has added a checklist template based on the OECD Framework for the Classification of AI Systems to the recently introduced AI Governance solution. The OECD AI Checklist template aims to help you evaluate AI systems from a policy perspective and can be applied to a range of AI systems across the dimensions outlined in the OECD framework.

The checklist also helps ensure that efficient triaging and policies are in place within your organization to tackle the broad range of domains and potential concerns linked to the usage of AI systems. In short, you can use the checklist to validate three things: whether the right set of policies is in place in your business to address gaps in AI systems under each of the principles; whether there is an organizational structure and the right set of owners to manage the risks identified through the checklist and to oversee their mitigation; and whether all of these domains are correctly represented within the life cycle of AI system development and/or usage.
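The kind of validation described above (a policy per principle, a named owner, and coverage across the life cycle) can be sketched in a few lines of Python. This is an illustrative sketch only and does not reflect how the OneTrust template is implemented: the `PrincipleCoverage` class, the `find_gaps` function, and the life cycle stage names are all assumptions made for the example. The principle names are the five OECD AI Principles listed earlier in this article.

```python
from dataclasses import dataclass, field
from typing import List

# The five OECD AI Principles as listed earlier in this article.
PRINCIPLES = [
    "Inclusive growth, sustainable development, and well-being",
    "Human-centered values and fairness",
    "Transparency and explainability",
    "Robustness, security, and safety",
    "Accountability",
]

# Assumed stage names; substitute the stages your organization actually uses.
LIFE_CYCLE_STAGES = ["design", "development", "deployment", "monitoring"]


@dataclass
class PrincipleCoverage:
    """Hypothetical record of how one principle is covered in the business."""
    principle: str
    policy: str = ""   # name of the governing policy, empty if none exists
    owner: str = ""    # accountable person or team, empty if unassigned
    stages_covered: List[str] = field(default_factory=list)


def find_gaps(coverage: List[PrincipleCoverage]) -> List[str]:
    """Return human-readable gaps, checked principle by principle."""
    by_principle = {c.principle: c for c in coverage}
    gaps = []
    for principle in PRINCIPLES:
        entry = by_principle.get(principle)
        if entry is None:
            gaps.append(f"{principle}: not assessed")
            continue
        if not entry.policy:
            gaps.append(f"{principle}: no policy")
        if not entry.owner:
            gaps.append(f"{principle}: no owner")
        missing = [s for s in LIFE_CYCLE_STAGES if s not in entry.stages_covered]
        if missing:
            gaps.append(f"{principle}: stages not covered: {', '.join(missing)}")
    return gaps


# Invented example data: one fully covered principle, one partially covered.
coverage = [
    PrincipleCoverage("Accountability", policy="AI governance policy",
                      owner="Privacy office",
                      stages_covered=list(LIFE_CYCLE_STAGES)),
    PrincipleCoverage("Transparency and explainability",
                      policy="Model documentation standard",
                      stages_covered=["design", "development"]),
]

gaps = find_gaps(coverage)
for gap in gaps:
    print(gap)
```

In this example the fully covered principle produces no output, while the partially covered one is flagged for a missing owner and uncovered stages, and the three principles with no entry are reported as not assessed.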

To help organizations develop and deploy AI systems responsibly, OneTrust has created a comprehensive assessment template within the AI Governance tool that includes the checklist for the OECD Framework for the Classification of AI Systems. The OneTrust AI Governance solution is a comprehensive tool designed to help organizations inventory, assess, and monitor the wide range of risks associated with AI.

Speak to an expert today to learn more about how OneTrust can help your organization manage AI systems and their associated risks.