AI is reshaping how marketing operates: campaigns adjust in real time, models retrain on evolving customer behavior, and embedded agents continuously optimize segmentation, content, and bidding across channels. This acceleration creates new opportunities, but it also exposes a structural gap between the speed of AI-driven activation and the way consent and governance programs were originally designed to function.

Governance models built around periodic reviews and static permissions struggle to keep pace with AI systems that update daily or even hourly. According to recent industry findings, 70% of organizations report that their ability to govern AI is at odds with the speed at which AI initiatives move.

For marketing leaders, this gap directly affects campaign speed, activation, and confidence in how customer data can be used to power AI-driven experiences.

When consent signals, data lineage, and AI use cases evolve faster than oversight processes, marketing operations experience friction in the form of delayed launches, suppressed audiences, and uncertainty around what data can be confidently activated. Over time, this friction compounds by slowing innovation, reducing the amount of usable data available for personalization and modeling, and limiting the performance gains AI initiatives are meant to deliver.

AI acceleration is outpacing traditional consent models

AI agents are increasingly embedded throughout the marketing ecosystem, arriving through advertising platforms, analytics tools, CRM extensions, and third-party applications that introduce autonomous decision-making into everyday workflows. These agents operate continuously, processing customer data at a scale and velocity that exceeds human review cycles.

At the same time, many consent programs still rely on point-in-time collection and channel-specific records that do not automatically synchronize across systems. When a personalization engine pulls behavioral data from a CDP, or when a predictive model retrains on historical engagement signals, marketing teams need clarity on whether those data elements were collected with the appropriate permissions for AI-driven use. Without unified governance, uncertainty slows activation and increases internal review cycles.

This shift also expands the surface area of risk. Third-party vendors frequently embed AI capabilities into tools that were originally procured for different purposes, which means customer data may be processed in new ways that require updated governance oversight. In high-growth environments where campaign experimentation is encouraged, even low-risk initiatives can evolve into higher-impact use cases that warrant deeper review.

Consent therefore becomes an operational input rather than a compliance artifact. It determines which datasets can be used for personalization, which audiences can be activated across paid media, and which AI systems can learn from customer interactions.

The ROI implications of AI misuse

Governance discussions have historically centered on fines and compliance certifications, yet AI introduces consequences that directly affect marketing performance and long-term investment value. When improperly governed data is incorporated into AI models, remediation extends beyond deleting records, because models retain learned patterns and may require retraining or full rebuilds to correct misuse.

In practical terms, this can translate into paused campaigns, delayed product launches, and reallocated budget as teams reassess data sources and revalidate consent. A single incident involving biased outputs or unclear data provenance can also trigger heightened internal controls, extending review cycles for future initiatives and reducing overall campaign velocity.

Regulatory enforcement is evolving in parallel. Beyond monetary penalties, authorities have begun issuing algorithmic disgorgement orders that require organizations to delete unlawfully obtained data along with the models trained on that data, and in some cases they have imposed multi-year bans on specific AI use cases.

For marketing operations, these developments elevate governance from a risk management function to a revenue protection strategy, because the ability to deploy AI-driven personalization at scale becomes directly tied to the integrity of consent and data governance practices.

Operationalizing AI-Ready Governance for marketing

Traditional governance strategies rely on manual, point-in-time reviews and siloed risk assessments, whereas AI systems operate continuously and require governance that scales accordingly.

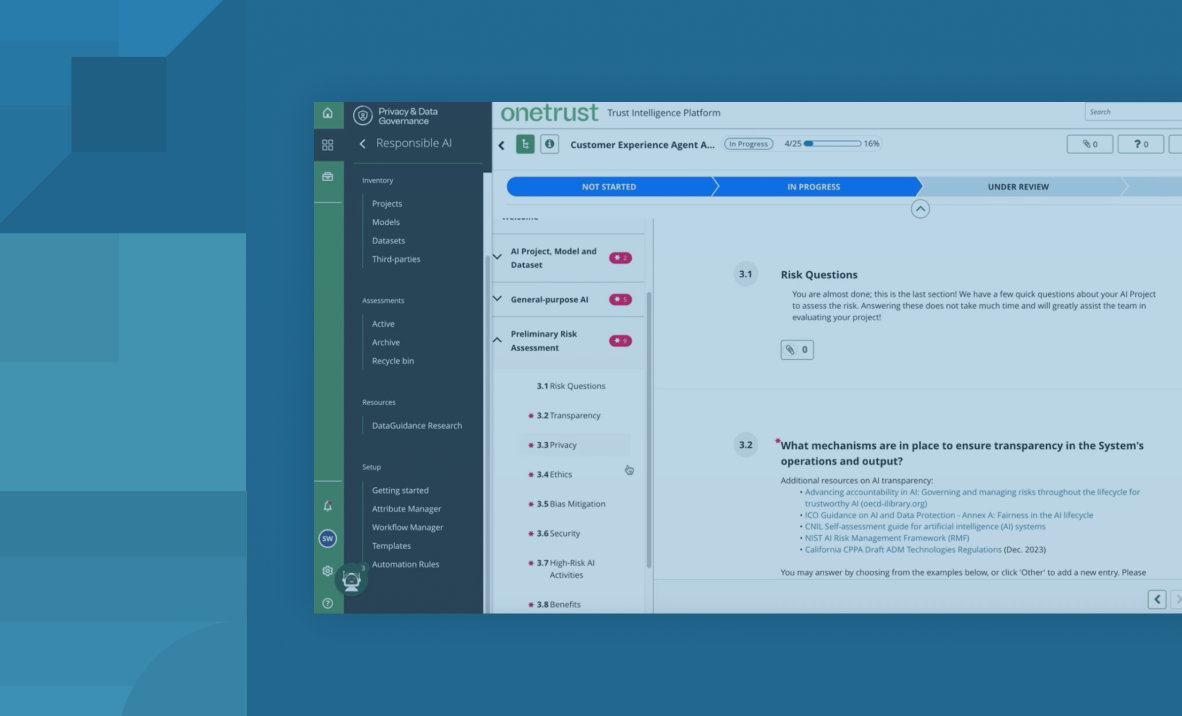

An AI-ready approach introduces three interconnected capabilities that support marketing performance: continuous context, scalable decisioning, and enforceable control.

Continuous context ensures that consent signals, regulatory obligations, data inventories, and AI use cases are connected in real time, allowing marketing teams to understand precisely which datasets are approved for which purposes. This visibility supports faster answers to practical questions about whether a propensity model can train on a particular dataset or whether a new vendor integration aligns with existing data use policies.

Scalable decisioning relies on pattern-based approvals and automated triage so that routine, low-risk marketing activities move forward efficiently, while high-risk initiatives receive focused human judgment.

This reduces administrative overhead and allows governance stakeholders to concentrate on complex scenarios that materially affect brand and customer trust.
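To make pattern-based triage concrete, here is a minimal sketch in Python. Everything in it is an illustrative assumption rather than a description of any specific product: the `UseCase` fields, the `PREAPPROVED_PATTERNS` set, and the two routing outcomes are hypothetical, and a real program would define its own risk criteria.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class UseCase:
    """A proposed marketing activity (hypothetical fields for illustration)."""
    name: str
    purpose: str
    uses_sensitive_data: bool
    new_vendor: bool


# Hypothetical patterns that governance stakeholders have already approved.
# Each pattern is (purpose, uses_sensitive_data, new_vendor).
PREAPPROVED_PATTERNS = {
    ("email_personalization", False, False),
    ("audience_suppression", False, False),
}


def triage(use_case: UseCase) -> str:
    """Route a use case: known low-risk patterns proceed, the rest get review."""
    pattern = (use_case.purpose, use_case.uses_sensitive_data, use_case.new_vendor)
    if pattern in PREAPPROVED_PATTERNS:
        return "auto_approve"
    # Anything that does not match an approved pattern goes to a person.
    return "human_review"


routine = UseCase("Spring promo", "email_personalization", False, False)
novel = UseCase("Propensity model", "model_training", True, False)
print(triage(routine), triage(novel))
```

The design choice worth noting is the default: an unrecognized use case falls through to human review instead of being approved, so automation only speeds up activities that have already been judged once.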

Enforceable control translates governance decisions into programmatic guardrails embedded directly within CRM, CDP, analytics, and AI environments. Instead of relying solely on documentation or advisory memos, consent rules and data use policies are applied at the system level, preventing unpermissioned data from being activated and ensuring that AI-driven workflows remain aligned with approved use cases.
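A system-level guardrail of this kind can be sketched as a consent check that runs before any audience is activated. The example below is a simplified illustration in Python under stated assumptions: `CustomerRecord`, `consented_purposes`, and `activate_audience` are hypothetical names, and real CRM or CDP platforms expose their own consent fields and APIs.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CustomerRecord:
    """A customer profile with the purposes the customer consented to (assumed shape)."""
    customer_id: str
    consented_purposes: frozenset = frozenset()


def filter_permissioned(records, purpose):
    """Return only the records whose consent covers the given purpose."""
    return [r for r in records if purpose in r.consented_purposes]


def activate_audience(records, purpose):
    """Apply the consent check first, then hand off only permitted customer IDs."""
    allowed = filter_permissioned(records, purpose)
    # A real integration would push these IDs to a downstream platform;
    # this sketch simply returns them.
    return [r.customer_id for r in allowed]


records = [
    CustomerRecord("c1", frozenset({"paid_media", "email"})),
    CustomerRecord("c2", frozenset({"email"})),
    CustomerRecord("c3"),  # no recorded consent
]
print(activate_audience(records, "paid_media"))
```

Because the filter sits inside the activation function, a record without matching consent never reaches the downstream system, regardless of what a policy document says.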

Trusted data drives measurable marketing outcomes

When consent, privacy, and AI governance are embedded into marketing workflows, the results are tangible and measurable. Teams experience faster time to activation because routine use cases are pre-approved within clear guardrails. Audience quality improves as permissioned data flows consistently across systems, reducing suppressed records and increasing usable reach. Campaign rework declines because consent signals are unified across channels rather than reconciled manually.

Industry research shows that 58% of organizations cite legal, governance, and compliance concerns as top barriers to AI adoption, which highlights the extent to which governance friction can slow innovation.

By embedding governance at the point of activation, marketing operations reduce that friction and preserve the timeline and investment assumptions behind AI-driven initiatives.

Trusted data enables personalization that performs, supports transparent customer experiences, and protects brand equity in environments where AI decisions scale rapidly. Performance and protection move together because the same consent signals that unlock activation also safeguard how data is learned from, shared, and applied.

For a deeper dive into the governance architecture that enables innovation at machine speed, explore the whitepaper “The dream killer problem: Why traditional governance can’t keep up with AI.”

To explore how consent is evolving in the AI era and what this means for marketing activation, download the “What’s changing in consent with AI” infographic.

What marketing teams should know about AI-Ready Governance