On October 30, 2023, the G7 agreed on a voluntary International Code of Conduct for Organizations Developing Advanced AI Systems.

In our rapidly evolving digital world, advanced AI systems such as generative models are becoming commonplace, bringing both potential breakthroughs and new risks. Recognizing the far-reaching impact of AI, the G7 adopted the Code as the latest in a series of recent and upcoming developments in AI guidance.

The G7's Initiative on AI

The G7 Hiroshima Artificial Intelligence Process, established at the G7 Summit in May 2023, was created to address the growing need for AI guidelines on a global scale. The process produced the Guiding Principles and the Code of Conduct, which aim to promote the safety, trustworthiness, and responsible development and use of AI technologies worldwide. But what does this mean for organizations building or adopting AI? And how will these guidelines shape the way AI is developed and deployed?

Navigating the AI Frontier: The G7 Code of Conduct

As AI technologies advance, the Code sets out a list of voluntary actions intended to keep the development of advanced AI systems responsible, safe, and trustworthy. Organizations developing advanced AI systems can use it as a practical reference:

1. Take appropriate measures throughout the development of advanced AI systems, including prior to and throughout their deployment and placement on the market, to identify, evaluate, and mitigate risks across the AI lifecycle.

2. Identify and mitigate vulnerabilities, and, where appropriate, incidents and patterns of misuse, after deployment including placement on the market.

3. Publicly report advanced AI systems’ capabilities, limitations, and domains of appropriate and inappropriate use, to support ensuring sufficient transparency, thereby contributing to increased accountability.

4. Work towards responsible information sharing and reporting of incidents among organizations developing advanced AI systems including with industry, governments, civil society, and academia.

5. Develop, implement, and disclose AI governance and risk management policies, grounded in a risk-based approach – including privacy policies, and mitigation measures.

6. Invest in and implement robust security controls, including physical security, cybersecurity, and insider threat safeguards across the AI lifecycle.

7. Develop and deploy reliable content authentication and provenance mechanisms, where technically feasible, such as watermarking or other techniques to enable users to identify AI-generated content.

8. Prioritize research to mitigate societal, safety and security risks, and prioritize investment in effective mitigation measures.

9. Prioritize the development of advanced AI systems to address the world’s greatest challenges, notably but not limited to the climate crisis, global health, and education.

10. Advance the development of and, where appropriate, adoption of international technical standards.

11. Implement appropriate data input measures and protections for personal data and intellectual property.

The G7 describes these actions as a non-exhaustive list within a living document, but together they give organizations a clear outline for harnessing AI's potential ethically and responsibly, with societal welfare at the forefront.

What Does This Mean for Your Business?

The G7's move is not merely symbolic. Although the Code is voluntary, its endorsement by G7 leaders gives it significant weight and encourages organizations to commit to its principles and actions. While comprehensive AI laws, such as the EU's draft AI Act, are still being debated, the Code can serve as a bridge until binding rules take effect.

Companies that already use AI systems, or plan to introduce AI into their business, can treat these principles as a north star when designing, deploying, and governing AI.

How Can OneTrust Help?

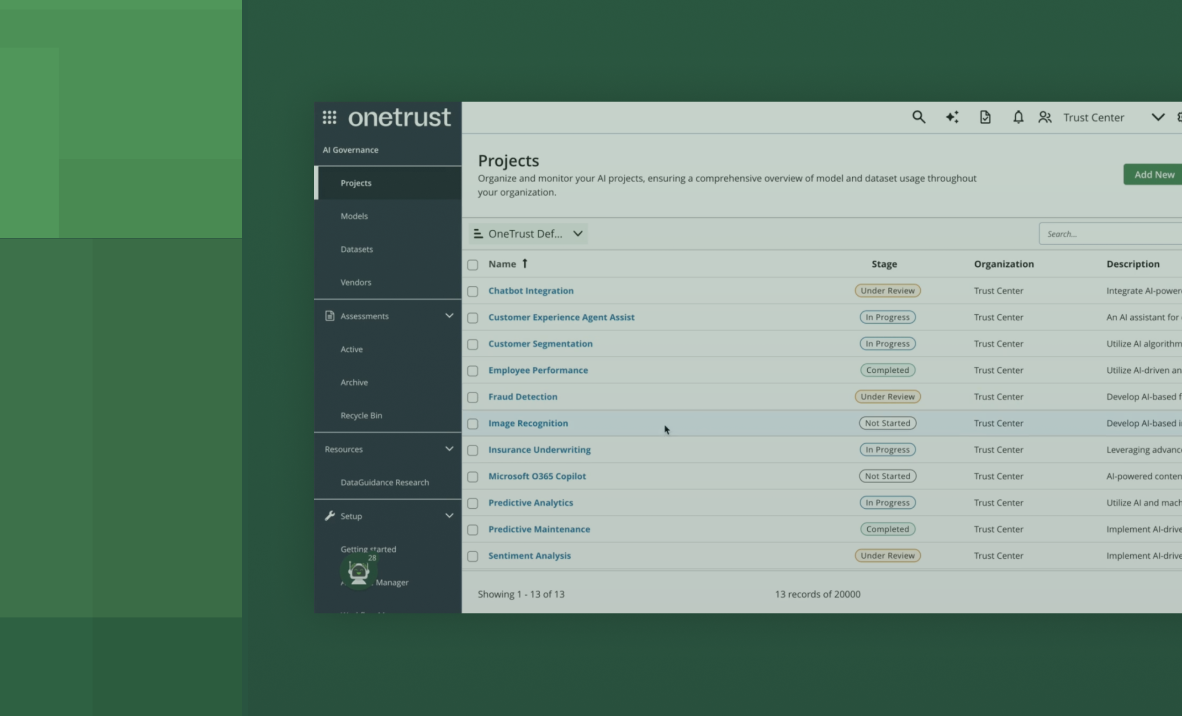

When it comes to your data and AI, a key concern is understanding where your data lives and how AI models affect it. With OneTrust AI Governance, you can maintain an inventory of the AI technology used across your business, so you know exactly where your data touches AI systems.

You can also ensure that your AI models are tested for bias and maintain the transparency that frameworks and regulations around the world call for. For every AI use case that arises in your business, OneTrust gives you a governance mechanism for evaluating risks throughout the process.

Learn more about AI Governance with OneTrust today.