Continuous Governance Is Replacing Periodic Privacy Workflows

Privacy programs were originally designed around predictable cycles. Risk assessments were scheduled. Inventories were updated periodically. Reviews happened before deployment. That model assumes stability, but AI introduces constant change.

A model approved last quarter may now be using new training data. A marketing team may connect a new dataset to an existing workflow. A customer support bot may begin generating outputs that were never explicitly tested during initial review.

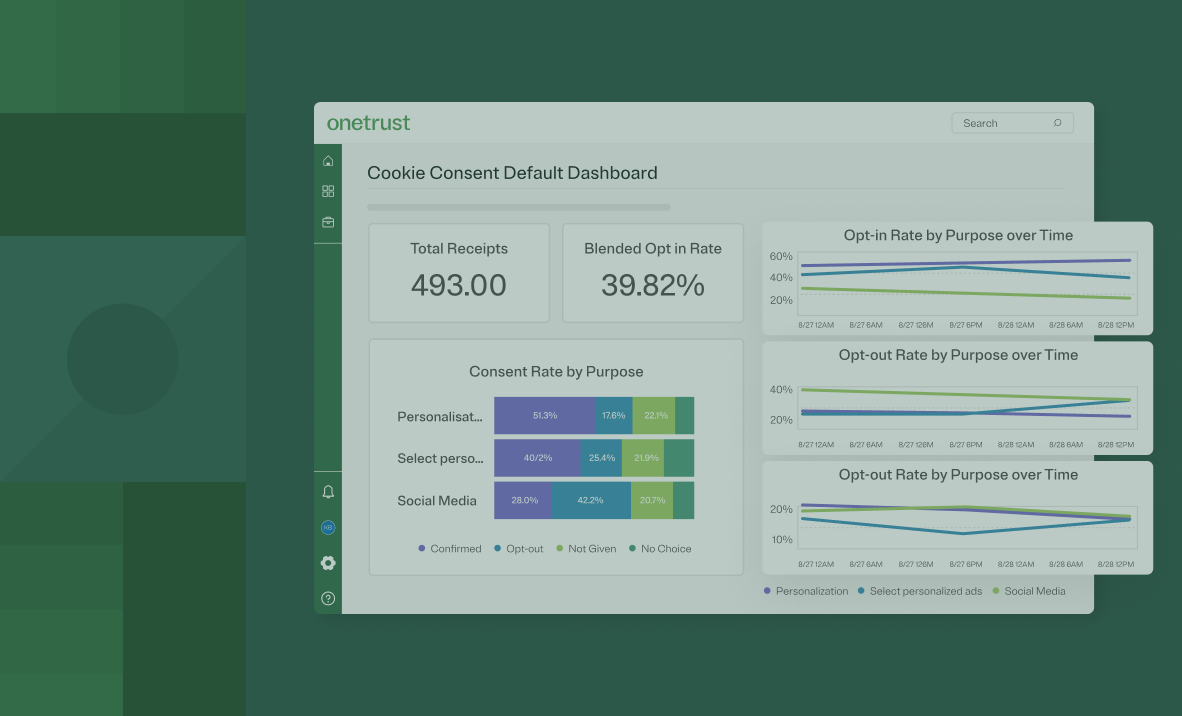

In these environments, risk does not emerge once; it evolves continuously. This shift is reflected in how Forrester evaluates modern privacy platforms. Capabilities such as AI risk assessment, model management, and data pipeline oversight are no longer treated as isolated features. They are assessed on whether they enable ongoing visibility and governance across the lifecycle.

Organizations operating at a baseline level attempt to keep pace through additional reviews. This often results in bottlenecks. A privacy team reviewing AI use cases manually may take weeks to approve a deployment, only for the underlying system to change shortly after.

Leaders approach this differently. They establish continuous visibility into how AI systems operate, including how data flows into models, how outputs behave, and how dependencies evolve. Instead of reacting to change, they monitor it. This allows them to identify risks earlier, reduce rework, and support faster decision-making across the business.
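The sketch below shows one minimal form that "monitoring change" could take. It assumes a hypothetical inventory that records which datasets each model was approved with. The check compares that approved snapshot against what each model uses now and flags any model whose inputs have changed since review. The names `ModelRecord`, `detect_drift`, and the dataset identifiers are illustrative only. They are not any vendor's API.

```python
from dataclasses import dataclass, field


@dataclass
class ModelRecord:
    """Hypothetical inventory entry for an AI model."""
    name: str
    approved_datasets: set[str]  # datasets in scope at the last review
    current_datasets: set[str] = field(default_factory=set)  # datasets in use now


def detect_drift(inventory: list[ModelRecord]) -> dict[str, set[str]]:
    """Return, per model, the datasets added since approval (candidates for re-review)."""
    drift = {}
    for model in inventory:
        added = model.current_datasets - model.approved_datasets
        if added:
            drift[model.name] = added
    return drift


inventory = [
    ModelRecord("churn-model", {"crm_accounts"}, {"crm_accounts", "web_clickstream"}),
    ModelRecord("support-bot", {"kb_articles"}, {"kb_articles"}),
]
print(detect_drift(inventory))  # {'churn-model': {'web_clickstream'}}
```

This sketch flags only added inputs. A fuller approach would likely also watch output behavior and upstream dependencies, which this example does not attempt.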

How the Forrester Wave Defines Privacy Leadership

Across these shifts, a clear pattern emerges in how leadership is evaluated. The Forrester Wave™ reflects a consistent set of capabilities that separate operational programs from manual ones:

- Continuous governance across AI and data systems

- Automated risk identification and enforcement

- Real-time evidence and auditability

- Integrated workflows across privacy, security, and data teams

These are not incremental improvements. They represent a different operating model, one that aligns governance with how AI systems evolve in practice.

This model underpins how leading platforms are evaluated, and it explains why some vendors are now emerging as category leaders.

Moving from Static Records to Audit-Ready Evidence

Documentation has long been central to privacy programs. Policies, assessments, and inventories serve as records of intent. But governance is no longer measured by documentation alone; it is measured by whether misuse is prevented. When regulators investigate, or when internal stakeholders evaluate AI risk exposure, the question is not whether a policy exists. It is whether the organization can demonstrate how that policy is applied in practice, and whether it actively stops inappropriate data use before it occurs.

Consider a common scenario. A company receives a request to demonstrate how a specific AI-driven decision was made. In a documentation-heavy program, this often triggers a manual effort: pulling together policies, reviewing logs, and reconstructing decisions across systems.

Now consider a different situation. A data scientist attempts to use a dataset that includes sensitive attributes for a model that was not approved for that purpose. In a documentation-driven approach, the issue may only surface after deployment. In an operational program, controls prevent that dataset from being used in the first place, and the attempted action is logged automatically.

This shift changes both risk posture and response. Instead of relying on reconstruction after the fact, leaders generate audit-ready evidence as a byproduct of their workflows. When a risk assessment is conducted, when a control is applied, or when a decision is made, it is recorded automatically within the system. This creates a continuous, reliable record of governance activity while ensuring that misuse is addressed before it creates downstream impact.
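The sketch below illustrates "evidence as a byproduct." It wraps a governance step in a decorator so that every call is written to a log, whether the step is allowed or denied. This is a minimal sketch under stated assumptions: `AUDIT_LOG` is an in-memory stand-in for a persistent, append-only evidence store, and `governed`, `approve_model`, and the risk threshold of 7 are all hypothetical.

```python
import functools
import json
from datetime import datetime, timezone

# Stand-in for an append-only evidence store (assumption: a real system would persist this).
AUDIT_LOG: list[str] = []


def governed(action: str):
    """Decorator that records every call to a governance step, allowed or denied."""
    def decorator(func):
        @functools.wraps(func)
        def wrapper(actor: str, *args, **kwargs):
            event = {
                "timestamp": datetime.now(timezone.utc).isoformat(),
                "actor": actor,
                "action": action,
                "inputs": [str(a) for a in args],
            }
            try:
                result = func(actor, *args, **kwargs)
                event["outcome"] = "allowed"
                return result
            except PermissionError as exc:
                event["outcome"] = f"denied: {exc}"
                raise
            except Exception:
                event["outcome"] = "error"
                raise
            finally:
                # Evidence is written as a side effect of doing the work,
                # so nothing has to be reconstructed later.
                AUDIT_LOG.append(json.dumps(event))
        return wrapper
    return decorator


@governed("risk_decision")
def approve_model(actor: str, model: str, risk_score: int) -> str:
    # Hypothetical rule: scores above 7 require escalation instead of approval.
    if risk_score > 7:
        raise PermissionError(f"risk score {risk_score} exceeds threshold")
    return f"{model} approved"


approve_model("privacy-analyst", "churn-model", 3)
try:
    approve_model("data-scientist", "face-match", 9)
except PermissionError:
    pass  # the denial is still recorded in AUDIT_LOG

for entry in AUDIT_LOG:
    print(entry)
```

The logging lives in one wrapper rather than in each governance function. As a result, individual steps cannot forget to record evidence.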

Leading Programs Turn Policy into Enforceable System Controls

Most organizations have well-defined privacy and AI policies. The gap emerges in execution. In many environments, policies depend on individuals to interpret and apply them correctly: a data scientist decides how to use a dataset, a marketer determines whether consent applies, and a product team interprets risk thresholds.

Forrester highlights enforcement as a critical capability, particularly in areas such as AI risk management, data governance, and policy application. The distinction is not whether policies exist, but whether they can be applied consistently across systems and workflows.

Leaders reduce reliance on interpretation by embedding controls directly into operational environments. For example, instead of requiring teams to manually check whether a dataset can be used for a specific AI model, controls can automatically restrict usage based on predefined policies.
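The sketch below shows purpose-based dataset restriction. A policy registry lists the purposes each dataset is approved for, and the check runs before any data is loaded. Unregistered datasets are denied by default. Everything here is hypothetical and chosen for illustration: the registry contents, `DatasetPolicy`, `check_dataset_use`, and `load_training_data`. In practice the policies would come from whatever catalog or governance platform an organization uses.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DatasetPolicy:
    """Hypothetical policy entry: the purposes a dataset is approved for."""
    dataset: str
    approved_purposes: frozenset[str]
    contains_sensitive: bool = False


# Illustrative registry; a real deployment would load this from a governance catalog.
POLICIES = {
    "crm_accounts": DatasetPolicy("crm_accounts", frozenset({"churn_prediction", "support"})),
    "health_claims": DatasetPolicy(
        "health_claims", frozenset({"claims_fraud"}), contains_sensitive=True
    ),
}


class PolicyViolation(Exception):
    """Raised when a requested dataset use is not permitted by policy."""


def check_dataset_use(dataset: str, purpose: str) -> None:
    policy = POLICIES.get(dataset)
    if policy is None:
        # Default-deny: unregistered data cannot be used for any purpose.
        raise PolicyViolation(f"{dataset} is not registered")
    if purpose not in policy.approved_purposes:
        label = "sensitive dataset" if policy.contains_sensitive else "dataset"
        raise PolicyViolation(f"{label} {dataset} is not approved for {purpose}")


def load_training_data(dataset: str, purpose: str) -> str:
    # The check runs before any data is read, so a violation never reaches the model.
    check_dataset_use(dataset, purpose)
    return f"loaded {dataset} for {purpose}"


print(load_training_data("crm_accounts", "churn_prediction"))
try:
    load_training_data("health_claims", "marketing_propensity")
except PolicyViolation as err:
    print(f"blocked: {err}")
```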

Instead of relying on teams to remember consent requirements, systems can enforce them at the point of activation. This approach ensures that governance decisions are not only defined but consistently executed.
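The sketch below enforces consent "at the point of activation." Consent is checked when a campaign is activated, not when the audience was assembled, and contacts without consent for that purpose are suppressed. It is a minimal illustration that assumes each contact carries a set of consented purposes. The `Contact` type, `activate_campaign`, and the purpose names are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Contact:
    email: str
    consents: set[str] = field(default_factory=set)  # purposes this person has agreed to


def activate_campaign(contacts: list[Contact], purpose: str) -> list[str]:
    """Return only contacts whose recorded consent covers this purpose.

    The check runs at activation time, not when the audience was assembled.
    """
    eligible = [c.email for c in contacts if purpose in c.consents]
    suppressed = len(contacts) - len(eligible)
    print(f"{purpose}: {len(eligible)} eligible, {suppressed} suppressed")
    return eligible


audience = [
    Contact("a@example.com", {"email_marketing"}),
    Contact("b@example.com", set()),
    Contact("c@example.com", {"email_marketing", "profiling"}),
]
activate_campaign(audience, "email_marketing")  # prints "email_marketing: 2 eligible, 1 suppressed"
```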

Align Privacy, Security, Data, and AI Workflows

Privacy does not operate in isolation. AI risk spans multiple functions, including legal, security, data, engineering, and business teams. In many organizations, these functions operate with limited shared visibility. Information is passed between teams, often through manual coordination. Decisions take longer, and accountability becomes difficult to track.

Forrester’s evaluation places significant emphasis on integration, breadth of capabilities, and cross-functional alignment. These criteria reflect a growing expectation that governance must operate across domains, not within silos. Consider a scenario where a new AI use case is introduced. Legal reviews compliance requirements, security evaluates risks, and data teams assess inputs. Without connected workflows, these reviews happen sequentially, often with incomplete context.
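The sketch below contrasts a connected workflow with sequential hand-offs. Legal, security, and data reviews all read the same use-case record and run concurrently, and their findings are collected in one place. It is a minimal sketch under stated assumptions: the `UseCase` fields, the three review rules, and the `CATALOG` set are invented for illustration. They do not stand for real compliance criteria.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass
from typing import NamedTuple, Optional


@dataclass(frozen=True)
class UseCase:
    """One shared record that every reviewing team reads (fields are hypothetical)."""
    name: str
    datasets: tuple[str, ...]
    uses_personal_data: bool
    lawful_basis: Optional[str]
    externally_exposed: bool
    threat_model_done: bool


class Finding(NamedTuple):
    team: str
    ok: bool
    note: str


CATALOG = {"crm_accounts", "kb_articles"}  # illustrative set of governed datasets


def legal_review(uc: UseCase) -> Finding:
    ok = not uc.uses_personal_data or uc.lawful_basis is not None
    return Finding("legal", ok, "lawful basis recorded" if ok else "no lawful basis recorded")


def security_review(uc: UseCase) -> Finding:
    ok = not uc.externally_exposed or uc.threat_model_done
    return Finding("security", ok, "threat model in place" if ok else "threat model missing")


def data_review(uc: UseCase) -> Finding:
    unknown = sorted(set(uc.datasets) - CATALOG)
    note = f"uncatalogued inputs: {unknown}" if unknown else "all inputs catalogued"
    return Finding("data", not unknown, note)


REVIEWS = (legal_review, security_review, data_review)


def review_use_case(uc: UseCase) -> tuple[bool, list[Finding]]:
    # All teams review the same record at once instead of passing it along in sequence.
    with ThreadPoolExecutor(max_workers=len(REVIEWS)) as pool:
        findings = list(pool.map(lambda review: review(uc), REVIEWS))
    return all(f.ok for f in findings), findings


bot = UseCase(
    name="support-summarizer",
    datasets=("kb_articles", "chat_transcripts"),
    uses_personal_data=True,
    lawful_basis="legitimate_interest",
    externally_exposed=True,
    threat_model_done=False,
)
approved, findings = review_use_case(bot)
print("approved:", approved)
for f in findings:
    print(f"{f.team}: {f.note}")
```

Because every reviewer works from the same immutable record, no team is reviewing an outdated copy. The combined findings also show in one place why a use case was held back.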

Connecting these workflows lets each function act on the same context at the same time, so governance operates as a connected system rather than as a set of independent processes.